Track 02 / NLP & Text

Entities, spans, intent, sentiment, moderation, preference labels. Domain-trained reviewers for legal, medical, and financial text. Classical NLP and LLM-era workflows under the same discipline.

Techniques

From NER and intent for classical pipelines to preference ranking and red-team prompts for LLM post-training — under the same review discipline.

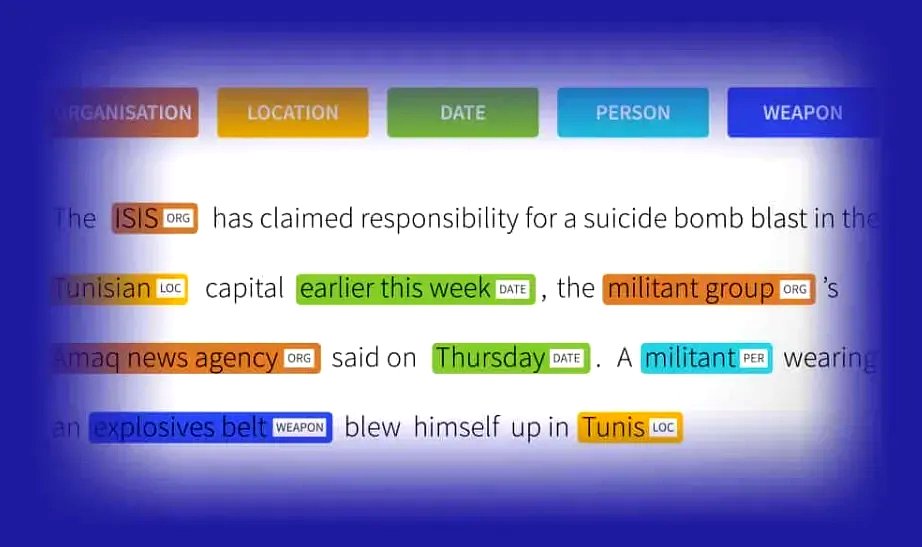

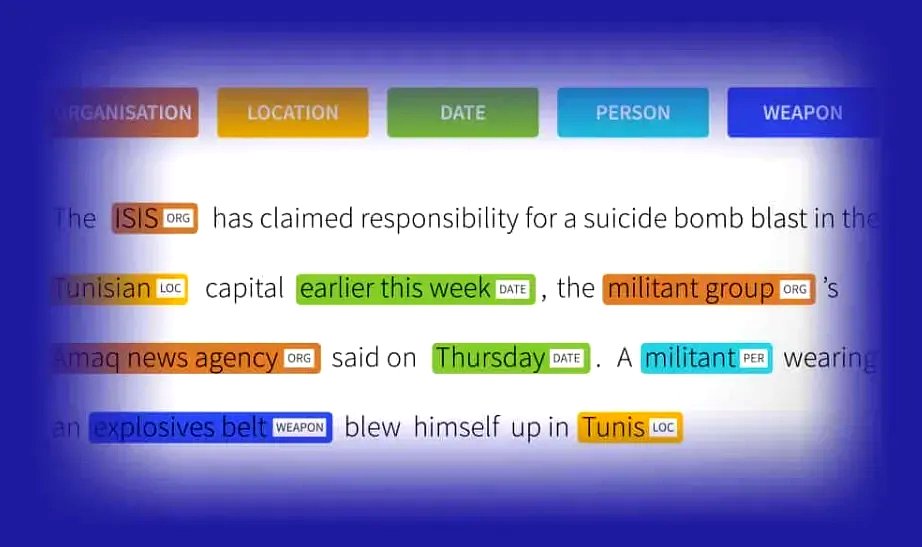

Span-level entity tagging under domain-specific ontologies. Off-the-shelf (PERSON, ORG, LOC, DATE) or bespoke — drug mentions, legal citations, financial instruments, threat categories.

Intent labels for CX routing, sentiment for feedback analytics. Multi-label support and schema-defined confidence bands rather than forced binary decisions.

Beyond flat entities — spans connected by typed relations for knowledge-graph construction, claim extraction, contract clause mapping. Tooling: Label Studio, Prodigy, Brat, V7 text.

Toxicity, harm categories, platform-specific content rules. Operators train on your policy document, not on a generic "what feels bad" heuristic. Escalation path for high-severity calls.

Response-pair preference labelling for LLM post-training — helpfulness, harmlessness, factuality. Every label carries a rubric-tied rationale so post-hoc drift is explicable.

Query-document relevance on graded scales. Calibrated against your relevance rubric so rerank model training isn’t chasing an operator’s personal sense of "relevant".

Where we’ve delivered

A legal span annotator is not labelling medical notes. Domain-trained reviewer pools keep judgment anchored.

Contract clause tagging, case-citation extraction, obligation & risk span labelling. Reviewer pool with legal training.

Clinical notes, drug mentions, condition / procedure tagging under reviewer oversight. PHI-safe environment.

Earnings transcripts, instrument extraction, regulatory filings. Multi-language support for EMEA & African exchanges.

Preference labels, red-team prompts, instruction quality, content policy. For foundation-model labs and LLM-app teams.

Schema specifics

Text schemas fail in predictable places. These are the surfaces most often worth tightening before a pilot runs clean.

Rules for when entities can nest (PERSON inside ORG) vs. when they must be flat.

Punctuation, possessives, hyphenation. A consistent boundary rule or drift is inevitable.

When multiple classes apply, which wins for single-label downstream tasks.

Explicit abstain class rather than forcing a decision — carries more signal.

Severity and reviewer confidence as separate axes for moderation workflows.

Constrained rationale codes so preference-label rationales cluster cleanly for analysis.

Questions we get

Yes — English plus selected African and European partner languages. The operator pool varies by language; we scope language coverage at kick-off.

Yes — pairwise and k-way preference, red-team prompts, instruction quality. Rubric-tied rationales rather than free-text so labels cluster for reward-model training.

Domain-trained reviewer pools with specific qualifications per engagement — verified at kick-off. Medical work runs under PHI-safe environment and supervision.

Yours. Label Studio, Prodigy, Brat, V7 text, Labelbox, Scale, or internal tools. We train to the platform you already run.

Yes — policy-document calibration, dual-axis severity/confidence, explicit escalation path for high-severity calls. Scope is set per engagement.

Other annotation tracks

NLP & text sits alongside two other tracks under the same QA discipline and the same operating model.

Scope with us

We scope operators, rubric work, and a written agreement threshold together — and run a pilot against a co-drafted rubric before you commit to steady-state volume.