Data annotation, at production scale.

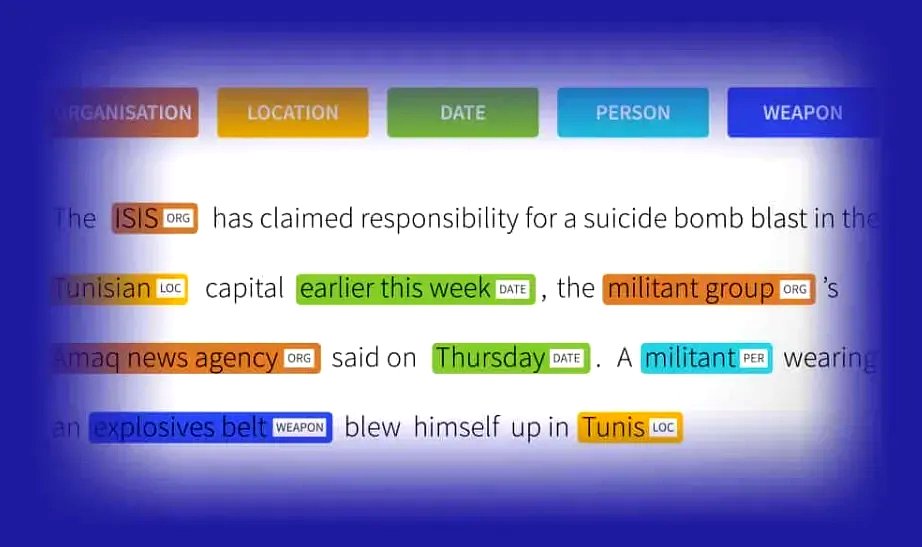

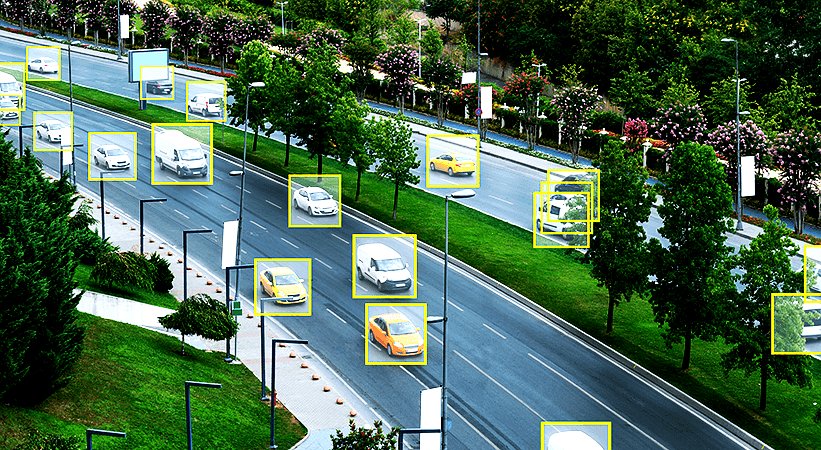

What we operate

Data annotation services, validation and QA, human-in-the-loop AI operations, back-office data work, and managed data teams. Delivered from a single Nairobi operation, by named teams, at enterprise standards.

Operating model

The services

From raw annotation through production-grade AI operations, every category is delivered by the same managed team model: trained on your task, accountable to your pipeline.

Our broadest category. Image, video, LiDAR, and text labeled by dedicated teams trained on your schema and held to your accuracy threshold. Six core techniques, one delivery discipline.

A self-contained validation operation that plugs into any pipeline, ours or yours. Multi-tier review, inter-annotator agreement scoring, written accuracy guarantees. Applies across every data type we touch.

When your model is in production, it still needs human eyes. Content moderation pipelines, AI output review, and transcription for training and live ops, run by dedicated teams inside your workflow, not an offshore queue.

The unglamorous but critical layer: structured data operations that feed AI training. Extraction, enrichment, and normalization, handled by an operation that treats data hygiene as a deliverable, not a side effect.

The wrapper around everything else. A dedicated team that behaves as an extension of your AI org: a named delivery lead, embedded in your Slack or Linear, on a continuous delivery model. Not a project. An operation.
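For readers who want to see what inter-annotator agreement scoring can look like in practice, here is a minimal sketch using Cohen's kappa for two annotators. The choice of kappa and the function name `cohens_kappa` are assumptions made for illustration; the page does not say which agreement metric is used in production.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items.

    Illustrative only: the metric and this helper are assumptions,
    not a description of any specific production pipeline.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")

    n = len(labels_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement: probability both pick the same label at random,
    # given each annotator's own label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label throughout.
        return 1.0
    return (observed - expected) / (1.0 - expected)


annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

Kappa corrects raw agreement for agreement expected by chance, which is why it is often preferred over a plain match rate when label distributions are skewed.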

How engagements work

No spot work. We scope, staff, calibrate, and run continuously as an extension of your AI org for the lifetime of the engagement.

Pipeline review, volume targets, task type. A first conversation that returns something useful either way.

Schema walk-through, acceptance criteria, security alignment, SOW. Drafted with your data lead.

Team trained on your schema and calibrated against gold-standard sets before any production data moves.

Daily throughput with multi-tier QA, weekly IAA reporting, and a named delivery lead in your channels.

Expand headcount, add workflows, fork specialist subteams without renegotiating the operating model.

Typical ramp from first call to live production: 3–5 weeks. Calibration is never skipped; it is the reason accuracy holds once we scale.
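The calibration step above, where a team is checked against gold-standard sets before production data moves, could be sketched as a simple gate. Everything here is hypothetical: the function name `calibration_report`, the data shapes, and the 0.75 threshold are assumptions for illustration, not the actual acceptance criteria of any engagement.

```python
def calibration_report(gold, submissions, threshold):
    """Score each annotator against a gold-standard set.

    gold:        dict mapping item id -> correct label
    submissions: dict mapping annotator name -> {item id -> label}
    threshold:   minimum accuracy to pass (hypothetical value)
    """
    if not gold:
        raise ValueError("gold set must not be empty")

    report = {}
    for annotator, labels in submissions.items():
        # Items the annotator skipped count as misses.
        correct = sum(
            1 for item, truth in gold.items() if labels.get(item) == truth
        )
        accuracy = correct / len(gold)
        report[annotator] = {
            "accuracy": accuracy,
            "passed": accuracy >= threshold,
        }
    return report


gold = {"img1": "car", "img2": "truck", "img3": "car", "img4": "bus"}
submissions = {
    "annotator_a": {"img1": "car", "img2": "truck", "img3": "car", "img4": "bus"},
    "annotator_b": {"img1": "car", "img2": "car", "img3": "car", "img4": "truck"},
}
for name, result in calibration_report(gold, submissions, threshold=0.75).items():
    print(name, result)
```

In this example `annotator_a` scores 1.0 and passes, while `annotator_b` scores 0.5 and would be held back for further training before touching production data.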

What we've delivered

Delivered for Windward, our longest-running annotation client. Sustained across multiple pipeline generations.

Trained, calibrated, and retained in Nairobi across annotation, validation, HITL, and back-office teams.

Inter-annotator agreement averaged across production accounts. Reported weekly, never retrofitted.

Across computer vision, NLP, and multimodal pipelines. Repeat engagements, not one-off projects.

Scope with us

We read your task schema, your volume requirements, your QA standards. Then we staff and deliver against them as an extension of your AI org, not a vendor on a queue.